Netflix’s hit series The Queen’s Gambit tells the story of a young woman who – spoiler warning – rises to the top of the chess world, beating all her opponents in the end. To do so, she needs to analyse past games, learning the different openings and moves.

The chess world was shaken in the late 90s when the news broke of AI programs becoming unbeatable opponents. More recently, the same happened in the world of Go. Growing data processing capacity and sophisticated programming challenge the human ability to stay on top of such complex games. The advance of AI has made many people ask half seriously whether humans will even be needed in the future.

The insurance sector has always made use of all kinds of information technologies and data. In the old world, information was imprecise and expensive, and its processing was slow and likewise expensive. These deficiencies set limitations to how efficiently the risk-sharing principle of insurance could be implemented.

The fast development of information technology has changed and continues to change the situation. The volume and processing speed of digital data are now growing exponentially while the costs are falling. This high-speed progress will likely produce results that exceed all expectations.

Technological progress offers significant opportunities for better and more efficient insurance business. Hopefully this will also expand the range and variety of affordable insurance cover. Improved accuracy does not remove the need for insurance, however: predictions may be decisively more accurate than before, but the risk event itself still occurs fully at random.

Progress is never free of risk. The models that are applied may prove to be deficient. Because technology is fast and efficient, a deficient model may come into widespread use before its flaws are recognised.

Analytics may also cause problems concerning the fairness of data use. Even well-intentioned insurance analytics may lead to discrimination. The insurance sector must uphold high ethical principles in order to responsibly utilise the potential of data technology for the benefit of its customers as well as to weed out any potential discriminatory features.

Could AI be entrusted with the task of this ethical oversight? I doubt it, at least for the foreseeable future.

You see, ethics is a very challenging domain. If we wanted to automate it today, we would first need unambiguous ethical principles to stick to. Several such systems have been developed in the course of history: Kant’s categorical imperative and Bentham’s utilitarianism, to name two notable ones. These kinds of models, however, can lead to situations that are contradictory even within a single system. They are also not commensurable with each other. It is possible that as AI development continues, we will come across better ways to ensure ethical conduct in the solutions offered by technology, but at the moment, there are no rules that would guarantee the successful automation of ethics.

We began with The Queen’s Gambit because human achievements in chess continue to inspire respect, even though AI now surpasses human ability at chess and at any similar game with predictable rules that can be analysed. AI will prevail as long as the players are prohibited from moving outside of the board or switching their knights for kangaroos. It can calculate through the possible options faster than its human opponent and pick the winning strategies.

But what if the rules were to change in the middle of the game? What happens when a black swan enters the game board – a piece that wasn’t even supposed to exist?

In insurance, the black swan interferes with the game by way of ethical problems. Any problem is uncomplicated as long as the rules are clear and every case can be unambiguously classified; such problems can be easily solved by artificial intelligence. Even cases that fall outside narrowly defined rules can be dealt with using machine learning.

Data ethics reveals many black swans. For now, the player is left to face them without the support of a perfect AI – ethics cannot be programmed, because ethical decisions require human-like thinking. In the pursuit of ethical use of AI and data, we constantly run into new situations in which the casuistic operating models are insufficient.

Fairness is the cornerstone of ethical operations as we seek to balance the interests of the individual customer on one side and the insurance collective on the other. Fairness is defined by regulatory requirements and by the various contracts and recommendations against which activities can be evaluated. The pursuit of fairness does not inspire confidence unless the whole sector adheres to transparency and clarity. These elements make it possible to evaluate activities, and the insurance sector can then engage in dialogue with the other stakeholders to improve fairness.

Looking for more?

Other articles on the topic

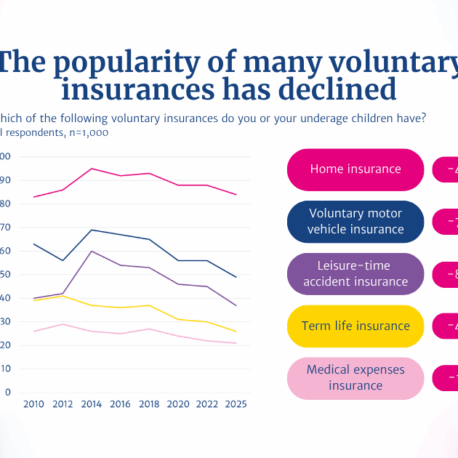

2025 Insurance Survey: Finns have fewer voluntary insurance policies than before

Finns are happy with their insurances – 86% consider compensations proportionate to the suffered loss, and claims are rarely rejected

Customers are already protected if an insurance company fails – An EU-wide insurance guarantee scheme is not needed

Financial sector’s VAT treatment needs an overhaul, but the Finnish pension system must not be hampered with added tax burden